Optimizing Your Website for SEO in 2013

Google's algorithms have always been something of a mystery, but for many webmasters they have become a nightmare. Over the last year, keyword searches have become erratic, understanding how to improve page rank is more confusing than ever, and the search engine giant is penalizing online businesses for all sorts of ungainly reasons.

Two recent surveys, conducted independently of each other, have shed some light on how Google's algorithms measure the performance of a website and rank it accordingly. For the most part, the results told us things we already knew, but they also revealed a shift in the factors that produce the best results.

The first study, conducted by BusinessBolts.com, analyzed 100 randomly selected keywords and assessed them against the top five websites that appeared in Google for each search. A second survey, conducted by Dr. Peter J. Meyers, took 10,000 B2B keywords and split them across 20 categories. Although the search results were predictably erratic, significant patterns were found in respect of keywords, word count, title tags, backlinks and local searches.

Title Tags, Keywords and Word Count

It seemed at one point that Google was ignoring title tags, and some SEO specialists still discredit the need for updating your meta-fields. The latest surveys suggest your choice of keywords in your title tags is one of the most important aspects of your website architecture. Given that these tags are used by search engines to index your site, increase visibility and encourage web users to click, it stands to reason that they are needed.

The overall use of keywords, on the other hand, is not as important as it used to be. At one time search engines invested substantial trust in keywords and specified that they should be placed in strategic positions on a webpage so the page could be correctly classified. The latest tests revealed that the significance of keywords has been reduced to the main heading and one or two of the sub-headings, depending on the length of your content.
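To see where a keyword actually sits on a page, a small audit script can help. The following is a minimal sketch using only Python's standard library; the class and function names, the sample page and the choice to track `h1` to `h3` are illustrative assumptions, not something taken from the surveys.

```python
from html.parser import HTMLParser


class KeywordAudit(HTMLParser):
    """Collect the text of the <title> tag and of h1-h3 headings."""

    TRACKED = {"title", "h1", "h2", "h3"}

    def __init__(self):
        super().__init__()
        self.current = None  # tracked tag we are currently inside, if any
        self.found = {}      # tag name -> list of text strings

    def handle_starttag(self, tag, attrs):
        if tag in self.TRACKED:
            self.current = tag
            self.found.setdefault(tag, []).append("")

    def handle_endtag(self, tag):
        if tag == self.current:
            self.current = None

    def handle_data(self, data):
        if self.current:
            self.found[self.current][-1] += data


def keyword_placement(html, keyword):
    """Return, per tracked tag, how many elements contain the keyword."""
    audit = KeywordAudit()
    audit.feed(html)
    needle = keyword.lower()
    return {
        tag: sum(needle in text.lower() for text in texts)
        for tag, texts in audit.found.items()
    }


# Hypothetical sample page, for illustration only.
sample = """
<html><head><title>Handmade Oak Tables | Example Joinery</title></head>
<body><h1>Handmade Oak Tables</h1>
<h2>Why choose oak tables?</h2>
<h2>Delivery and care</h2></body></html>
"""
print(keyword_placement(sample, "oak tables"))
```

For the sample page this reports one match each in the title, the main heading and the sub-headings, which is roughly the placement the tests point to.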

Speaking of content, Google seems to favor a word count of around 900 words. This figure fits the standard copywriting philosophy that long copy proves more successful than short copy. Your homepage, services and product pages will therefore fare better with both search engines and prospects if they are longer.
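Checking a page against that figure is easy to automate. The sketch below counts the visible words in an HTML page and reports how far it falls short of a target. The 900-word target comes from the survey figure above; the function names and the rule for what counts as a word are assumptions made for illustration.

```python
import re
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Gather page text, skipping the contents of script and style tags."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self.skip_depth:
            self.skip_depth -= 1

    def handle_data(self, data):
        if not self.skip_depth:
            self.chunks.append(data)


def visible_word_count(html):
    """Count runs of letters, digits and apostrophes in the visible text."""
    extractor = TextExtractor()
    extractor.feed(html)
    return len(re.findall(r"[A-Za-z0-9']+", " ".join(extractor.chunks)))


TARGET = 900  # approximate figure reported in the survey results


def words_short_of_target(html, target=TARGET):
    """Return how many more words the page needs to reach the target."""
    return max(0, target - visible_word_count(html))


# Hypothetical page fragment, for illustration only.
page = "<p>Long copy sells.</p><script>var x = 1;</script>"
print(visible_word_count(page), words_short_of_target(page))
```

Treat the target as a rough guide rather than a threshold; the surveys describe a tendency, not a rule.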

Backlinks

Link building is perhaps the most troublesome SEO tactic at present. We know that inbound links carry a lot of weight with Google, and the results published in the respective surveys confirm this – the problem is knowing which inbound links will be accepted as legitimate.

Search engine algorithms do not allow inbound links that manipulate the page results, and have limited link building exercises to genuine referrals from third-party web owners, social media users or contributions to reputable industry magazines. There is a question mark over whether links embedded into articles on third-party blogs will continue to hold any sway moving into 2013. For the time being, it’s probably best to forget that idea.

What was significant, although not entirely unexpected, was the importance of likes, shares and +1s on various social media networks. It is apparent that Google discounts any links posted from the business owner’s own linked account, but does weigh up the number of people sharing the link or clicking “Like” on Facebook.

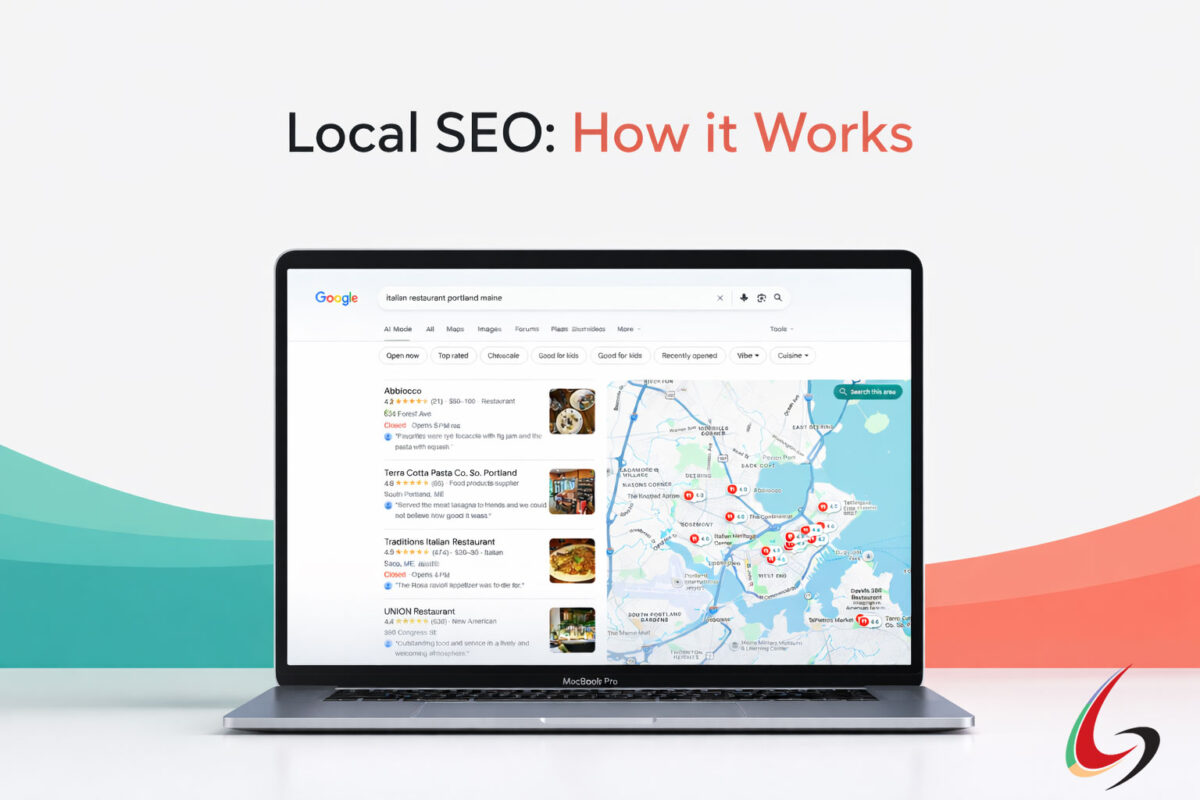

Another aspect that is given preference in Google search is locality. We have known for some time now that local results are given priority in search engine listings when a specific area is typed in as part of the search term, but it is surprising how many web owners have not updated their contact details or included their locality on the homepage to take advantage of this.

Author Rank

It didn’t surface in the latest round of search tests, but you can add rel=author to the list of SEO tactics you should be employing in 2013. Author Rank was launched last June and is expected to give websites with a recognized author a better page rank than sites that do not have a verified author.

In his book, “The New Digital Age,” Google’s Executive Chairman, Eric Schmidt, writes: “…information tied to verified online profiles will be ranked higher than content without such verification… The true cost of remaining anonymous, then, might be irrelevance.”

Author Rank is a strategy Google hopes to develop to ensure that the content of a website has the quality it intends to deliver to the end user. Rather than trusting backlinks that can be easily manipulated, the search engine giant will rely on websites that use writers with ranking authority. Websites that are set up with a ranking author are clearly identified in search engine results by a photograph of the writer next to the search result.

It may take Google a while to collect enough data on authors before it can determine the level of ranking authority they have, but the earlier you set your site up with Author Rank status, the better position you will hold once the rel=author stats kick in. Matt Cutts, chief spokesman for Google, says: “…over time, as we start to learn more about who the high quality authors are, you could imagine that starting to affect rankings.”

Setting Up Author Rank

To set up Author Rank you will need a Google+ account. In the About section of your profile, there is a space titled “Contributor to” which needs to be updated with the URL of your website or any other site you have contributed content for. You then need to send your profile code – which looks like this – to the owner of the website you contribute to and ask them to paste it in the relevant plug-in of their content management system.
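The exact profile code is not reproduced here, so the following is only a sketch of one commonly seen form: an ordinary link to the author's profile with a rel=author query parameter appended. The function name, the `PROFILE_ID` placeholder and the author name are all hypothetical; substitute your own profile URL and check it against whatever your plug-in expects.

```python
from html import escape


def author_link(profile_url, author_name):
    """Build an anchor pointing at an author profile, with rel=author
    appended to the URL as a query parameter (assumed markup form)."""
    separator = "&" if "?" in profile_url else "?"
    href = f"{profile_url}{separator}rel=author"
    # Escape both values so the result is safe to paste into a page.
    return f'<a href="{escape(href, quote=True)}">{escape(author_name)}</a>'


# PROFILE_ID is a placeholder, not a real profile.
print(author_link("https://plus.google.com/PROFILE_ID", "Jane Doe"))
```

Generating the snippet rather than typing it by hand keeps the markup consistent if several writers contribute to the same site.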

It may be the case that Google's algorithms change continuously over the next couple of years until the search engine giant figures out the strategies to achieve its ultimate objective – delivering quality content to its end users. Google is not out to penalize small businesses and promote large corporations, as many people believe, but it does expect online business owners to maintain their websites and prove they can deliver the quality end users want.