Google’s New Search Feature Lets You Take a Photo of Something, Then Ask Questions About It

I think it’s kind of nice when a stranger compliments you on that jacket and asks where you got it. But that might happen less and less after this . . .

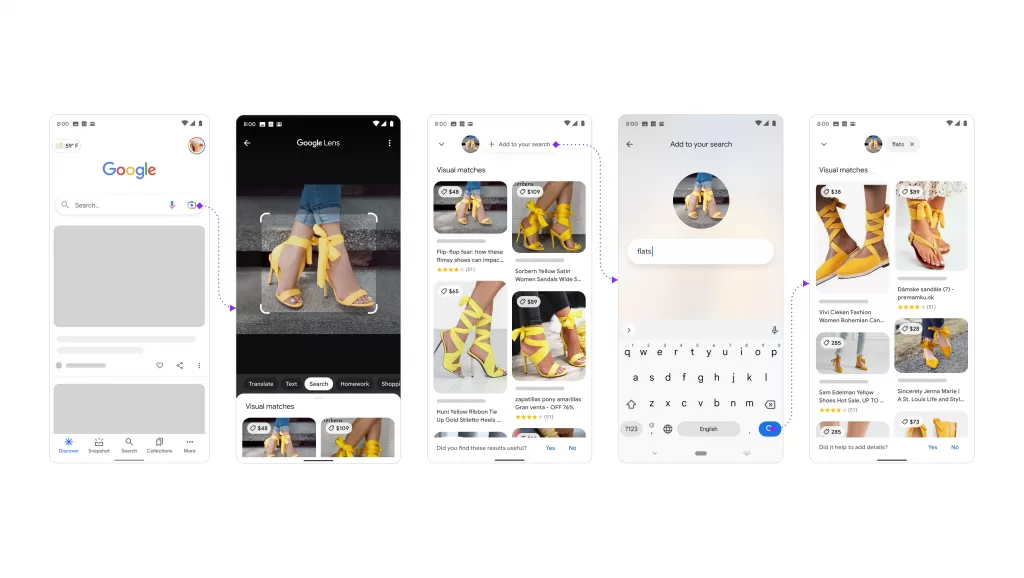

Google is going to start letting you search for stuff by taking a photo, and then adding text to zero in on exactly what you’re looking for.

They’re beta-testing a feature called Google Lens Multisearch that uses A.I. to figure out what you took a photo of, and then answer questions about it.

For now, they’re focused on using it for shopping. So instead of asking someone where they got their jacket, you could take a picture from across the room . . . add a phrase like “where to buy” . . . and it’ll tell you.

They think it could have wider applications, though. For example, you could eventually take a picture of something that’s broken and type “how to fix.” They say it can already do that with broken bicycles.

It won’t work with everything, the same way your voice assistant is still kind of dumb. But the A.I. is getting better and better.

They’re rolling it out on the Google app for iPhones and Androids, but it’s not available for everyone yet.

(Here’s a visual of how you could take a picture of high heels, add text, and find flats that look similar.)